What Are VLA Models?

Vision-Language-Action (VLA) models are AI systems that combine image understanding with language understanding and map both to physical actions in the real world. You can think of them as a robot’s brain: they can see, take in instructions, and carry out tasks. Unlike conversational models, which are limited to answering questions, these models can perform physical tasks such as grasping an object, moving around a space, or interacting with their environment.

Reasons for the Popularity of VLA Models

VLA models have attracted wide attention as the field moves toward AI that performs real work rather than only generating text. Companies want robots that can follow natural human instructions and complete tasks without manual programming. These models make robots more autonomous, versatile, and practical in everyday settings such as homes, warehouses, hospitals, and offices. Because they understand both their environment and human instructions, they are strong candidates for the future of robotics.

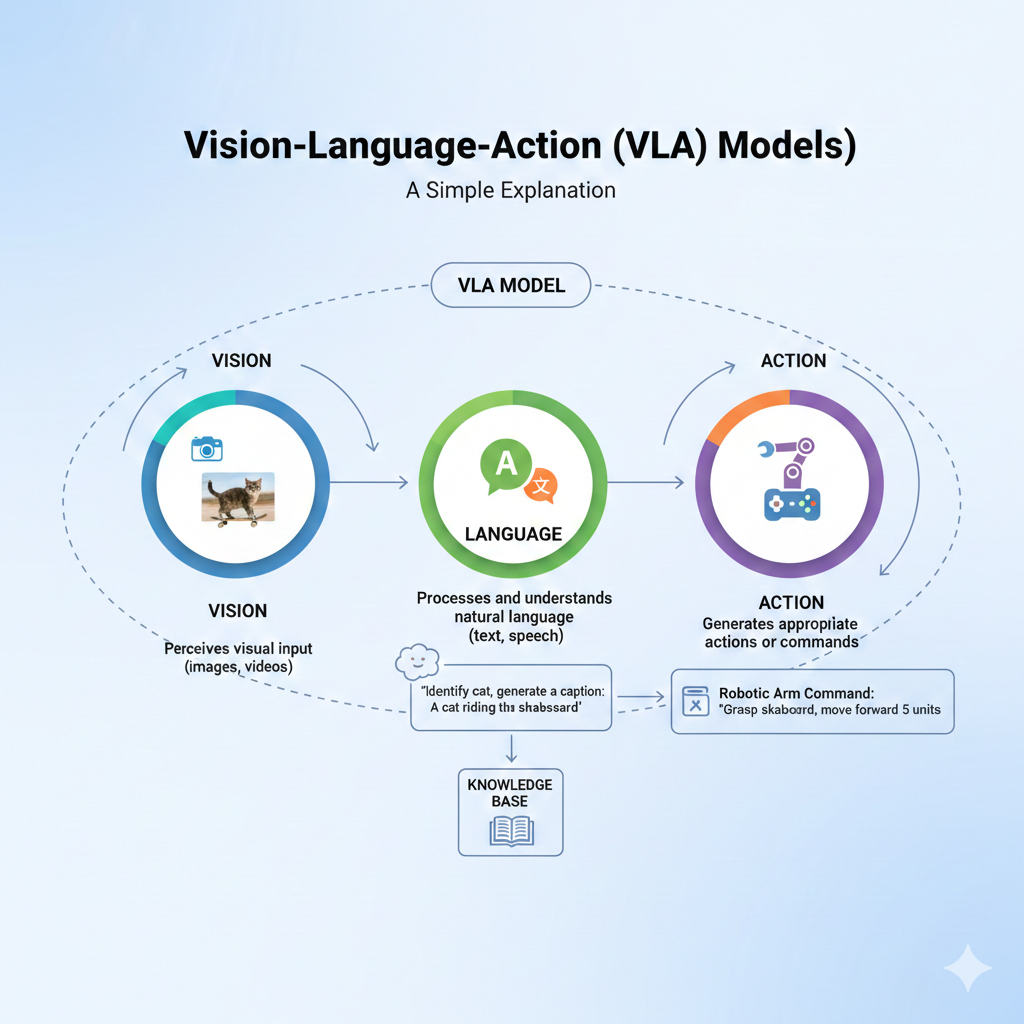

How VLA Models Work

VLA models combine three main components: vision, language, and action. Together, these components let the model make informed decisions and carry out tasks safely and accurately. Vision lets the model perceive its surroundings, language lets it understand the instructions it is given, and action lets it physically execute the task. Combining the three allows a machine to accomplish complex tasks in changing, unpredictable environments.
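To make the division of labor concrete, here is a toy perceive, understand, act step in Python. It is only an illustration under stated assumptions, not how any particular VLA model is implemented: real systems use learned neural networks for all three stages and are typically trained end to end, whereas the hypothetical functions `perceive`, `parse_instruction`, and `choose_action` below are hand-written stand-ins, and the dictionary scene format and (x, y) positions in metres are assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class DetectedObject:
    """Hypothetical detection: an object label and its (x, y) position in metres."""
    label: str
    x: float
    y: float


def perceive(scene: dict[str, tuple[float, float]]) -> list[DetectedObject]:
    """Vision stand-in: a real model infers objects from camera pixels;
    here the labelled scene is simply handed in."""
    return [DetectedObject(label, x, y) for label, (x, y) in scene.items()]


def parse_instruction(instruction: str, known_labels: set[str]) -> str | None:
    """Language stand-in: return the first visible object's label mentioned in the text."""
    for word in instruction.lower().split():
        word = word.strip(".,!?")
        if word in known_labels:
            return word
    return None


def choose_action(target: DetectedObject, gripper: tuple[float, float]) -> tuple[float, float]:
    """Action stand-in: the (dx, dy) displacement that moves the gripper onto the target."""
    return (target.x - gripper[0], target.y - gripper[1])


def vla_step(scene: dict[str, tuple[float, float]], instruction: str,
             gripper: tuple[float, float]) -> tuple[float, float] | None:
    """One perceive -> understand -> act step of the toy pipeline."""
    objects = perceive(scene)
    target_label = parse_instruction(instruction, {o.label for o in objects})
    if target_label is None:
        return None  # the instruction mentions nothing the robot can currently see
    target = next(o for o in objects if o.label == target_label)
    return choose_action(target, gripper)


scene = {"cup": (0.5, 0.25), "plate": (0.75, 0.5)}
print(vla_step(scene, "Pick up the cup", gripper=(0.0, 0.0)))  # (0.5, 0.25)
```

Keeping the three stages as separate functions mirrors the vision, language, and action split described above; in an actual VLA model that boundary is usually blurred, because one network maps pixels and words directly to actions.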

Vision: Understanding the Environment

The vision component lets the model perceive what is happening around it. Using cameras, the model can recognize objects, people, shapes, and movements, and can even estimate the size and layout of a room. This visual perception tells the robot where objects are and how far away they are. For example, the model can scan a table, identify a cup, and recognize that the cup sits in the middle of the table.
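The “where is the cup and how far away is it” idea can be illustrated with classical geometry rather than a learned model. The sketch below uses the pinhole-camera relation, distance ≈ focal length (in pixels) × real object width ÷ object width in the image (in pixels), and a simple check of whether a detected box sits near the centre of the table’s box. VLA models generally learn such spatial cues implicitly from data; the `Box` type, the pixel coordinates, the 600-pixel focal length, the 8 cm cup width, and the `tolerance` parameter are all hypothetical values chosen for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Box:
    """Axis-aligned bounding box in pixel coordinates (hypothetical detector output)."""
    left: float
    top: float
    right: float
    bottom: float

    @property
    def width(self) -> float:
        return self.right - self.left

    @property
    def height(self) -> float:
        return self.bottom - self.top

    @property
    def center(self) -> tuple[float, float]:
        return ((self.left + self.right) / 2, (self.top + self.bottom) / 2)


def estimate_distance_m(box: Box, real_width_m: float, focal_length_px: float) -> float:
    """Pinhole-camera estimate: distance = focal length * real width / pixel width."""
    return focal_length_px * real_width_m / box.width


def is_near_center(obj: Box, surface: Box, tolerance: float = 0.15) -> bool:
    """True if obj's centre is within `tolerance` (a fraction of the surface's
    width and height) of the surface's centre."""
    (ox, oy), (sx, sy) = obj.center, surface.center
    return (abs(ox - sx) <= tolerance * surface.width
            and abs(oy - sy) <= tolerance * surface.height)


table = Box(100, 200, 540, 400)
cup = Box(310, 270, 350, 310)
print(estimate_distance_m(cup, real_width_m=0.08, focal_length_px=600))  # ≈ 1.2 (metres)
print(is_near_center(cup, table))  # True
```

The distance formula only holds when the object’s real size is known and it faces the camera roughly head-on, which is one reason learned models that combine many visual cues tend to be more robust.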

Language: Understanding Human Instructions

The language component helps the model understand natural human instructions. It can interpret spoken or written instructions such as “Pick up the cup,” “Go to the kitchen,” or “Help me clean the table.” No technical commands or programming are required. Because the model understands everyday language, people can talk to robots much as they talk to other people.
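Many VLA models are built on a vision-language model backbone: the camera image is cut into fixed-size patches that become “visual tokens,” the instruction is split into text tokens, and both are processed as one sequence. The sketch below only shows how such a combined input might be laid out. The 16-pixel patch size follows the common Vision Transformer convention, while the whitespace tokenizer, the `<img_k>` placeholder names, and the `<bos>`/`<sep>` markers are hypothetical simplifications; real models use learned subword tokenizers and turn each patch into an embedding vector.

```python
from __future__ import annotations


def count_patches(height: int, width: int, patch: int = 16) -> int:
    """Number of non-overlapping patch x patch tiles in an image (ViT-style)."""
    if height % patch or width % patch:
        raise ValueError("image size must be a multiple of the patch size")
    return (height // patch) * (width // patch)


def tokenize_instruction(instruction: str) -> list[str]:
    """Hypothetical whitespace tokenizer; real models use learned subword vocabularies."""
    return instruction.lower().replace(",", "").replace(".", "").split()


def build_input_sequence(height: int, width: int, instruction: str,
                         patch: int = 16) -> list[str]:
    """Lay out one placeholder token per image patch, then the instruction's tokens."""
    image_tokens = [f"<img_{i}>" for i in range(count_patches(height, width, patch))]
    return ["<bos>", *image_tokens, "<sep>", *tokenize_instruction(instruction)]


seq = build_input_sequence(224, 224, "Pick up the cup.")
print(len(seq))   # 202  (1 + 196 image tokens + 1 + 4 text tokens)
print(seq[-5:])   # ['<sep>', 'pick', 'up', 'the', 'cup']
```

Putting image and text tokens in one sequence is what lets the model relate a word such as “cup” to the patches that show the cup.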

Action: Performing Real-World Tasks

The model’s action component decides the next move once the scene and the command have been interpreted. It determines the appropriate movement, how to carry it out safely, and how to adjust if something unexpected happens. This is what allows the robot to pick up objects, move around a room, open doors, and get around obstacles. With additional training data and experience, the model can learn to carry out tasks more smoothly and accurately.
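One concrete way to build the action component, used by published VLA models such as RT-2 and OpenVLA, is to discretize each continuous action dimension into 256 bins so the model can emit actions as ordinary tokens; the robot then converts the predicted bin indices back into motor commands. The sketch below shows uniform binning over a fixed range. The [-1, 1] range, the seven-value action layout in the example, and the function names are assumptions for illustration (OpenVLA, for example, spans the 1st to 99th percentile of its training actions rather than a fixed range).

```python
from __future__ import annotations

NUM_BINS = 256          # bins per action dimension
LOW, HIGH = -1.0, 1.0   # assumed normalised range of every action dimension


def action_to_bins(action: list[float]) -> list[int]:
    """Map each continuous value to an integer bin index in 0..NUM_BINS-1."""
    width = (HIGH - LOW) / NUM_BINS
    bins = []
    for value in action:
        clipped = min(max(value, LOW), HIGH)     # clamp out-of-range values
        index = int((clipped - LOW) / width)
        bins.append(min(index, NUM_BINS - 1))    # HIGH itself goes into the last bin
    return bins


def bins_to_action(bins: list[int]) -> list[float]:
    """Map bin indices back to continuous values at the centre of each bin."""
    width = (HIGH - LOW) / NUM_BINS
    return [LOW + (index + 0.5) * width for index in bins]


# Hypothetical 7-value action: xyz translation, xyz rotation, gripper (normalised).
action = [0.1, -0.25, 0.0, 0.0, 0.5, -1.0, 1.0]
tokens = action_to_bins(action)
print(tokens)  # [140, 96, 128, 128, 192, 0, 255]

# Round-trip error is at most half a bin width: (HIGH - LOW) / NUM_BINS / 2 = 1/256.
recovered = bins_to_action(tokens)
print(max(abs(a - r) for a, r in zip(action, recovered)) <= 1 / 256)  # True
```

The trade-off is precision: with 256 bins over [-1, 1], each command can be off by up to 1/256 of the range, which is usually acceptable for small, frequent corrective motions.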

Real-Life Uses of VLA Models

VLA models are not limited to a few sectors; they are being applied across many fields. In homes, VLA-powered robots can clean, sort items, and carry heavy objects for you. In factories and warehouses, they move goods, manage shelves, and handle repetitive jobs, relieving human workers of these tedious tasks. In healthcare, robots with VLA models can make life easier and safer for older people, deliver items to patients, and assist staff members with routine work. For navigation and mobility, these models help robots and drones move safely by recognizing obstacles and their surroundings.

Why VLA Models Matter

VLA models play a significant role in bringing AI closer to being a real assistant in the physical world. They reduce the need for technical skills, since people can speak naturally and the robot can understand them. Their ability to handle unpredictable environments makes automation safer and more flexible. Because a single system can perform many different tasks, they have great potential for running homes, industries, and hospitals efficiently and for developing smart cities.

Future of VLA Models

VLA models are expected to drive the next generation of home robots, fully autonomous service robots, and machines that can learn new tasks quickly. They are also likely to influence drone navigation, delivery systems, and self-driving technology. Collaboration between humans and robots will become more efficient as robots understand instructions more clearly and adapt to their surroundings. VLA models are among the most promising steps toward general-purpose robots that can handle a wide range of everyday tasks.